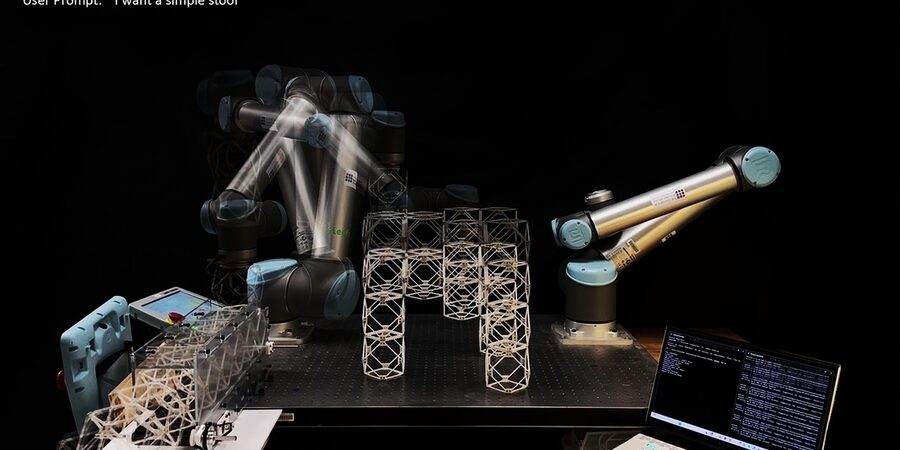

The speech-to-reality system combines 3D generative AI and robotic assembly to create objects on demand.

Generative AI and robotics are moving us ever closer to the day when we can ask for an object and have it created within a few minutes. In fact, MIT researchers have developed a speech-to-reality system, an AI-driven workflow that lets them give spoken instructions to a robotic arm and “speak objects into existence,” creating things like furniture in as little as five minutes.

With the speech-to-reality system, a robotic arm mounted on a table receives spoken input from a human, such as “I want a simple stool,” and then constructs the object out of modular components. To date, the researchers have used the system to create stools, shelves, chairs, a small table, and even decorative items such as a dog statue.

“We’re connecting natural language processing, 3D generative AI, and robotic assembly,” says Alexander Htet Kyaw, an MIT graduate student and Morningside Academy for Design (MAD) fellow. “These are rapidly advancing areas of research that haven’t been brought together before in a way that you can actually make physical objects just from a simple speech prompt.”

The idea started when Kyaw — a graduate student in the departments of Architecture and Electrical Engineering and Computer Science — took Professor Neil Gershenfeld’s course, “How to Make Almost Anything.” In that class, he built the speech-to-reality system. He continued working on the project at the MIT Center for Bits and Atoms (CBA), directed by Gershenfeld, collaborating with graduate students Se Hwan Jeon of the Department of Mechanical Engineering and Miana Smith of CBA.

The speech-to-reality system begins with speech recognition that processes the user’s request using a large language model, followed by 3D generative AI that creates a digital mesh representation of the object, and a voxelization algorithm that breaks down the 3D mesh into assembly components.
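To make the voxelization step concrete, here is a minimal Python sketch. The article does not say which algorithm the researchers use, so this is only an illustration under stated assumptions: it assumes the mesh has already been sampled into 3D points, and it snaps each point to a cubic grid cell that stands in for one modular component. The names `voxelize_points`, `samples`, and `voxel_size` are hypothetical.

```python
from math import floor


def voxelize_points(points, voxel_size):
    """Map 3D points (x, y, z) to the set of integer grid cells they fall in.

    Assumption: the points were sampled from the generated mesh, and one
    grid cell corresponds to one modular building block.
    """
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    cells = set()
    for x, y, z in points:
        # floor() keeps the mapping consistent for negative coordinates too.
        cells.add((floor(x / voxel_size),
                   floor(y / voxel_size),
                   floor(z / voxel_size)))
    return cells


# Hypothetical samples; the first two land in the same cell.
samples = [(0.1, 0.1, 0.0), (0.4, 0.2, 0.1), (1.2, 0.1, 0.0), (0.1, 0.1, 1.7)]
cells = voxelize_points(samples, voxel_size=1.0)
print(sorted(cells))  # [(0, 0, 0), (0, 0, 1), (1, 0, 0)]
```

A coarser `voxel_size` yields fewer, larger cells, which is one simple way to trade geometric detail against the number of components the arm has to place.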

After that, geometric processing modifies the AI-generated assembly to account for real-world fabrication and physical constraints, such as the number of components, overhangs, and the connectivity of the geometry. The system then generates a feasible assembly sequence and automatically plans the robotic arm's path, so that the arm can build the physical object from the user's prompt.
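The constraint checks and sequencing described above can be sketched on a voxel grid. This Python example is not the researchers' method, which the article does not detail. It assumes each component is an integer cell `(x, y, z)` with `z` pointing up, treats cells that share a face as connected, flags as an overhang any cell with nothing directly beneath it, and uses a simple greedy rule to order placement. All function names are hypothetical, and robot path planning is left out.

```python
from collections import deque

# Face-adjacent offsets (6-neighborhood) on the voxel grid.
NEIGHBORS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def touching(cell, cells):
    """Return the members of `cells` that share a face with `cell`."""
    x, y, z = cell
    return [(x + dx, y + dy, z + dz) for dx, dy, dz in NEIGHBORS
            if (x + dx, y + dy, z + dz) in cells]


def is_connected(cells):
    """Breadth-first search: True if every cell is reachable from any other."""
    if not cells:
        return True
    start = next(iter(cells))
    seen, queue = {start}, deque([start])
    while queue:
        for n in touching(queue.popleft(), cells):
            if n not in seen:
                seen.add(n)
                queue.append(n)
    return len(seen) == len(cells)


def overhangs(cells):
    """Cells above the lowest layer with no cell directly beneath them."""
    if not cells:
        return []
    ground = min(z for _, _, z in cells)
    return sorted(c for c in cells
                  if c[2] > ground and (c[0], c[1], c[2] - 1) not in cells)


def assembly_sequence(cells):
    """Greedy order: each cell sits on the ground layer or touches a placed cell."""
    if not cells:
        return []
    ground = min(z for _, _, z in cells)
    placed, order, remaining = set(), [], set(cells)
    while remaining:
        ready = [c for c in remaining if c[2] == ground or touching(c, placed)]
        if not ready:
            raise ValueError("no feasible next cell; structure is disconnected")
        nxt = min(ready, key=lambda c: (c[2], c[0], c[1]))  # lowest layer first
        order.append(nxt)
        placed.add(nxt)
        remaining.remove(nxt)
    return order


# Hypothetical tiny table: two legs and a three-cell top.
table = {(0, 0, 0), (2, 0, 0), (0, 0, 1), (1, 0, 1), (2, 0, 1)}
print(len(table), is_connected(table))  # 5 True
print(overhangs(table))                 # [(1, 0, 1)]
print(assembly_sequence(table))
# [(0, 0, 0), (2, 0, 0), (0, 0, 1), (1, 0, 1), (2, 0, 1)]
```

In this toy case the middle cell of the tabletop is reported as an overhang but still gets a valid place in the sequence, because it is attached sideways to a cell that is already in place. A real system would also need to decide whether the connection between components can carry that load.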